For seven years, if you wanted OpenAI in your product, you went through Microsoft Azure. That ended April 27, 2026 — and the implications for how businesses build with AI run deeper than the cloud logistics suggest.

ChatGPT Enterprise can now run on Google Cloud. OpenAI Codex is live on Amazon Bedrock. And a partnership once framed as the defining AI infrastructure deal of the decade has been quietly rewritten.

What the Deal Actually Changed

In 2019, Microsoft put in the first $1 billion and got exclusivity in return — all OpenAI products had to run on Azure. The April 27 amendment reversed three core terms:

First, OpenAI can now deploy across any cloud provider. AWS was designated the “exclusive third-party cloud distribution provider” for Frontier, OpenAI’s enterprise platform, alongside a $50 billion investment commitment from Amazon and an additional $100 billion over eight years.

Second, Microsoft no longer pays revenue shares to OpenAI. OpenAI’s reverse payments to Microsoft continue through 2030 but are now subject to a total cap.

Third, Microsoft’s IP license on OpenAI models shifted from exclusive to non-exclusive through 2032. This lets OpenAI grant comparable rights to Google, Amazon, or any other partner.

Microsoft remains the primary partner: OpenAI products “ship first on Azure” unless Azure cannot meet technical requirements. Satya Nadella said Microsoft will “exploit” the new structure. Worth taking his word for it.

Why OpenAI Needed This

The most important number in this deal isn’t Microsoft’s $135 billion equity stake or Amazon’s $50 billion commitment.

It’s OpenAI’s projected $14 billion loss in 2026.

Despite run-rate revenue exceeding $30 billion — and more than 1,000 enterprise customers spending over $1 million annually, a number that doubled in under two months — OpenAI’s infrastructure costs still exceed revenue (PPC Land, 2026).

Multi-cloud solves this differently than most coverage suggests. When AWS and Google Cloud want OpenAI on their platforms, they pay for the privilege. OpenAI trades “shelf space” for infrastructure subsidies rather than building its own compute from scratch. It’s a capital-efficient path toward profitability — and it simplifies OpenAI’s cost structure ahead of its planned Q4 2026 IPO.

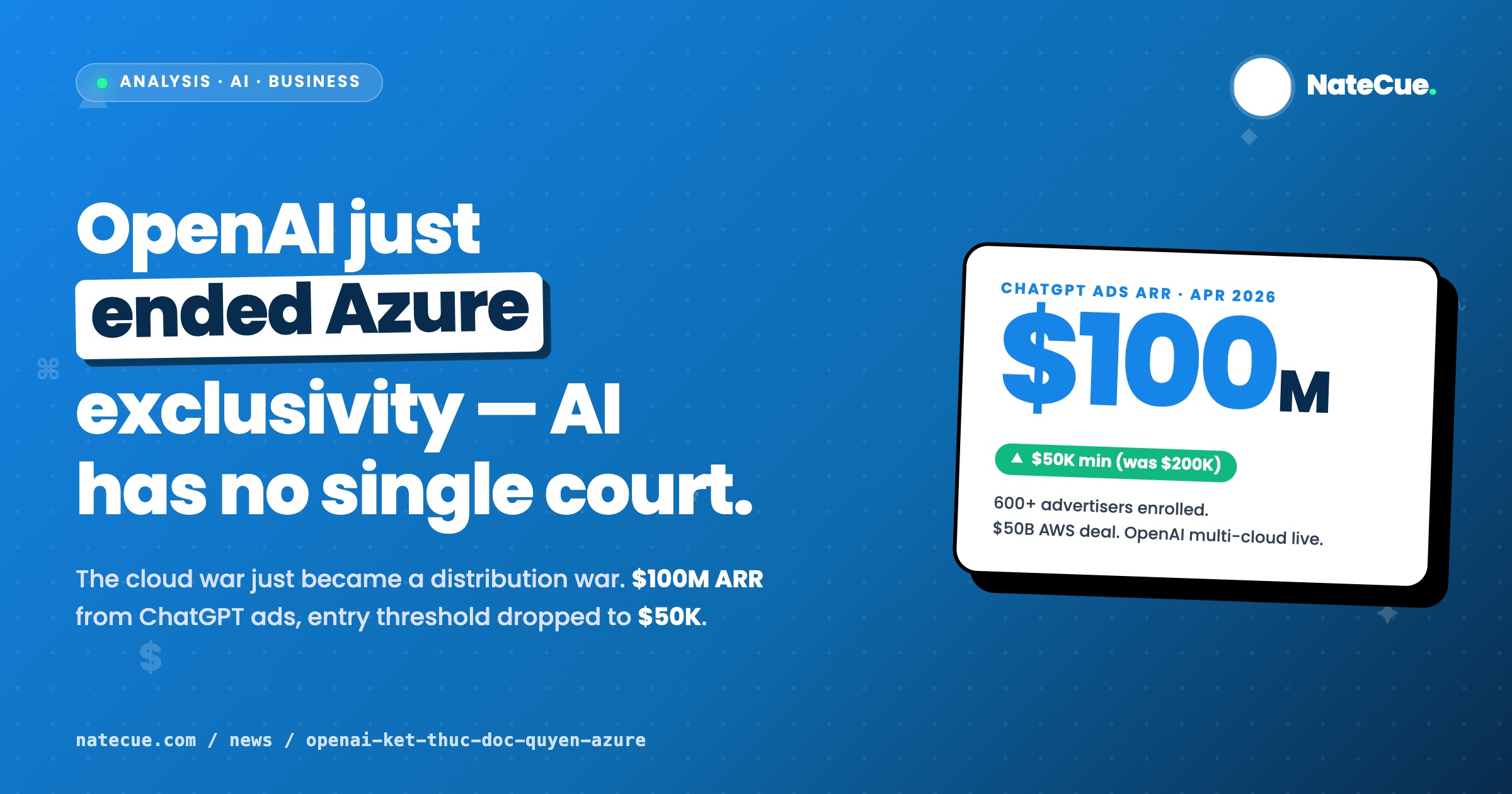

The Bigger Story: ChatGPT as Ad Platform

The part that got buried in the cloud coverage: ChatGPT’s advertising pilot exceeded $100 million in annualized revenue by April 2026, with 600+ advertisers enrolled. The minimum advertiser commitment just dropped from $200,000 to $50,000 (PPC Land, 2026).

That threshold reduction is the real signal.

OpenAI is pulling the mid-market into its ad ecosystem. B2B and D2C brands that couldn’t afford the original $200K floor now have a clear path in. And when OpenAI’s infrastructure is no longer tied to one cloud, scaling that ad network globally becomes dramatically cheaper.

Multi-cloud isn’t a cloud story. It’s the foundation for an ads network that can compete with Google and Meta on distribution — not on search volume, but on intent quality. A user asking ChatGPT “what project management tool should I use” is a different (and arguably richer) signal than a keyword query. That’s the inventory OpenAI is monetizing.

For APAC and Vietnam: Three Practical Takeaways

One: GPT API pricing will get more competitive. AWS and Google Cloud are now competing to distribute OpenAI models. That competitive pressure typically flows downstream to API pricing — good news for engineering teams in Vietnam and Southeast Asia building production AI applications.

Two: Amazon Bedrock is the fastest path to OpenAI in the region. GPT-4.1 and other OpenAI models went live on Amazon Bedrock immediately after the deal. For companies already running workloads on AWS (the dominant cloud in Vietnam’s startup ecosystem), this is an immediate expansion of AI capability without switching infrastructure.

Three: ChatGPT ads at $50K is real budget territory. Regional agencies and direct brands should be testing this now. The intent signal in ChatGPT is different from any other ad platform — users are mid-task, not just browsing. G42 already signed a sovereign cloud AI agreement with Vietnam (Computer Weekly, 2026), signaling the region is being treated as serious AI infrastructure territory.

The April 27 deal marks something structural: AI infrastructure is becoming a commodity layer — like bandwidth or storage before it. The race for model exclusivity is over.

The next competition is distribution. OpenAI just bought themselves a seat at every table.

NateCue's Take

The headline says "Microsoft loses exclusivity" but that's the wrong frame. The signal that matters: ChatGPT's ad pilot hit $100M+ ARR by April 2026, and the entry threshold dropped from $200K to $50K. OpenAI isn't becoming an AI provider; it's becoming a media platform. Multi-cloud is the infrastructure move that lets them scale that ad network globally.

For markets like Vietnam and Southeast Asia, where AWS and Google Cloud both have growing regional infrastructure, this matters differently than it does in the US. Local enterprises that couldn't access OpenAI natively through Azure now have a cleaner path through Bedrock or GCP, potentially with better latency and lower cost.

The competitive moat in AI isn't going to be "who has the best model." It's already shifting to "who controls the distribution layer." OpenAI just bought themselves the right to compete everywhere. That's a bigger deal than any benchmark score.